A few weeks ago, I wrote about some issues I have recently experienced with software, prompted by problems with Zotero after their 8.0 update. But there is a second dimension to security and trust in software: Software, especially Open Source, is currently being bombarded with “security advisories” from left and right. I think that’s bad. Not because I want software to suddenly become less secure, but because this has, at least for me, led to some serious issues that I believe are crucial to address.

Trusting Software

To quickly recap the primary point I made in my previous article: Yes, we can still trust software. But we should be cautious, because the various recent issues are likely aggravated by the liberal use of GPT models in writing code. In a few years, software might be less stable than we would like. The main point is that some software developers appear to be less concerned with stability than with the mindless addition of AI features out of fear of being “left behind.” This includes some very large software companies upon which millions of people rely.

Today’s article is about the other side: the developers. Because not just users need to trust their software. Developers need to trust the software they use, too. They need to trust in their ecosystem. And I have the gut feeling that this trust is beginning to crumble.

Nota bene: I am not talking about the constant issues with package managers that get bombarded with malware. That has been discussed at length elsewhere. This is a related phenomenon, but not the primary focus of this article.

Security Advisories

The point of departure for this argument is an observation I made in late March. After a few days off from developing in order to finish an R&R and do other research-related activities, I took a few hours on a weekend to clean up some of the piled-up work in the Zettlr repository. While doing that, I noticed a disturbing number of security advisories on the app.

If you don’t know what I’m talking about: A security advisory is basically a notification which developers (especially on GitHub) get that some of the libraries they depend on have critical vulnerabilities. If you’re not developing software, you have likely never seen one, because they get distributed only via private channels to developers directly. The basic idea: if they are being treated as confidential, developers have a bit more time to patch these vulnerabilities before they become public knowledge. Because as soon as they are, malicious actors will be able to exploit them.

So in principle, these advisories are great. And usually, they are easy to fix. For most of them, GitHub’s own management system, “Dependabot,” is able to produce automated changes that can be included in the code base with a single click. Sometimes, however, it’s more difficult. And then you have security advisories open on your own app that you can’t really close.

I mean, you could (by marking them as “not applicable”), but I’m convinced that getting into the habit of dismissing advisories can set a bad precedent. Instead, I prefer to keep them open until the corresponding developers have fixed them. Which leaves a bad taste. Right now there are about a dozen security advisories open on Zettlr, none of which I am able to close without wreaking havoc on the entire build process.

Cognitive Overload

But that is not even the main issue. There were always advisories I couldn’t close. There was always something security-critical happening. Such is life. The main issue is the frequency with which this happens right now. A few months ago, Google made the news with a GPT model that had allegedly found twenty security vulnerabilities completely autonomously. That was in August of last year. By now, such pipelines are likely capable of much higher speeds. They automatically sift through repositories and flag anything that’s not as tight as an airlock on the ISS.

This also applies to security researchers: Even if security researchers don’t deploy a fully automated system to find security vulnerabilities, they likely employ the help of AI to find new issues. All in all, it appears to me that the speed of new security vulnerabilities being reported outpaces the speed of developers fixing them.

And this is a problem. Because developers, especially those who do it in their spare time, have limited capacity to react to this quantity of reports. It feels a bit like fixing a breaking dam.

There is a good point to be made here: malicious actors likewise employ AI systems to find vulnerabilities faster. So the argument goes: if a security AI finds an exploit, we can expect that a malicious AI will find the same one, and possibly already has. So we had better open up reports to ensure that those are at least known and the developers can work through them.

However, that’s not how humans work. I have noticed a significant reduction in my alertness towards security vulnerabilities. When I see a new report that can’t be fixed right away, I am becoming more and more likely to dismiss it. Then I forget about it until the report closes itself because someone on the other side of the globe has finally updated their dependencies. And this is bad, because with the influx of non-critical security advisories, the actually severe ones are more likely to slip through: nothing inherent distinguishes them from the less severe ones.

Loss of Context

This is compounded by a significant loss of context in security reports. There are security advisories, and there are security advisories. I have not yet seen a security advisory that didn’t point out an actual issue. But, and this is the crucial difference: I have seen plenty of advisories that are simply not critical in a certain context.

When I started writing software, I was very afraid of receiving such advisories, because I was afraid that someone could do bad stuff with them. It took a few years until a good friend mentioned an instance where a security vulnerability had been open on some software package for over a decade without being fixed. And that with good reason: It complained about a piece of code being insecure that was working as intended. I don’t remember which software it was, but this was eye-opening.

A truly secure piece of software is one that doesn’t do anything. Every piece of software is inherently insecure. Because if it didn’t do things that may become problematic, it would not be of much use to us. There are nuances here, so let me use an example.

Zettlr allows remote code execution. That’s usually a red flag for every security-minded person. But not for Zettlr. Why? Because that scary-sounding “remote code execution” essentially just means that Zettlr uses Pandoc to bundle an HTML file with MathTeX downloaded from a CDN server. The MathTeX library is effectively code that comes from somewhere else and that could do anything on your computer. And yes, Zettlr could block that. But that would also mean depriving its users of many of the perks during export.

It is this context that is hugely important. Most still-open security advisories on the Zettlr repository relate to packages that are specified as development dependencies. This means they never make it into the final app; they are only used while building it. That, in turn, means that this software is executed primarily by people who know the pitfalls of JavaScript code and what could go wrong.
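To make the distinction between development and runtime dependencies concrete, here is a minimal TypeScript sketch of how open advisories could be sorted by where the affected package is declared. The `Advisory` shape, the package names, and the `triage` function are made up for illustration; they are not GitHub's or npm's actual data format, and a real setup would also have to resolve transitive dependencies.

```typescript
type Severity = "low" | "medium" | "high" | "critical";
type Scope = "runtime" | "unknown" | "development";

// Hypothetical advisory shape, not a real GitHub or npm format.
interface Advisory {
  id: string;          // made-up identifier
  packageName: string; // direct dependency that pulls in the flagged code
  severity: Severity;
}

// The two package.json fields this sketch cares about.
interface Manifest {
  dependencies?: Record<string, string>;
  devDependencies?: Record<string, string>;
}

// Lower rank means "look at this first".
const SCOPE_RANK: Record<Scope, number> = { runtime: 0, unknown: 1, development: 2 };
const SEVERITY_RANK: Record<Severity, number> = { critical: 0, high: 1, medium: 2, low: 3 };

function declares(deps: Record<string, string> | undefined, name: string): boolean {
  return deps !== undefined && Object.prototype.hasOwnProperty.call(deps, name);
}

// Runtime code ships to users; development code only runs while building.
function scopeOf(advisory: Advisory, manifest: Manifest): Scope {
  if (declares(manifest.dependencies, advisory.packageName)) return "runtime";
  if (declares(manifest.devDependencies, advisory.packageName)) return "development";
  return "unknown"; // assumption: undeclared packages get reviewed before dev-only ones
}

// Sort by scope first and by the reported severity second.
function triage(advisories: Advisory[], manifest: Manifest): Array<Advisory & { scope: Scope }> {
  return advisories
    .map((advisory) => ({ ...advisory, scope: scopeOf(advisory, manifest) }))
    .sort((a, b) =>
      SCOPE_RANK[a.scope] - SCOPE_RANK[b.scope] ||
      SEVERITY_RANK[a.severity] - SEVERITY_RANK[b.severity]);
}

// Illustrative data only; all names below are hypothetical.
const manifest: Manifest = {
  dependencies: { "runtime-lib": "*" },
  devDependencies: { "build-tool": "*" },
};

const advisories: Advisory[] = [
  { id: "A-1", packageName: "build-tool", severity: "critical" },
  { id: "A-2", packageName: "runtime-lib", severity: "medium" },
  { id: "A-3", packageName: "mystery-lib", severity: "high" },
];

for (const entry of triage(advisories, manifest)) {
  console.log(`${entry.id} ${entry.scope} ${entry.severity}`);
}
```

In this sketch, the “medium” advisory on the runtime package is listed before the “critical” one on the build tool. Whether that ordering is right for a given project is a judgment call; the point is only that the declared scope is one piece of context the severity label does not carry.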

An Example

Let’s look at one such advisory together, shall we?

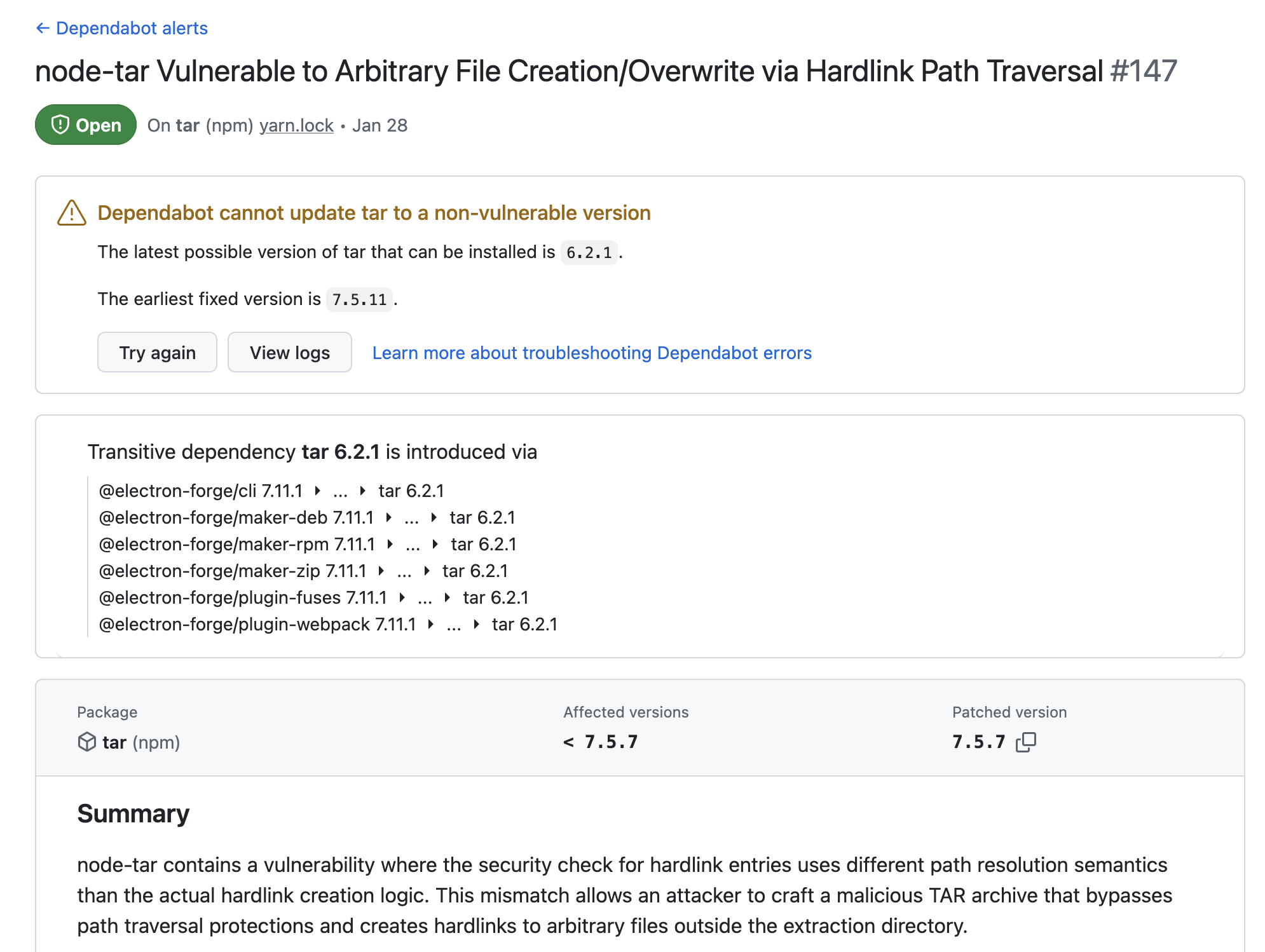

The screenshot shows one currently open security advisory on the Zettlr repository: “node-tar Vulnerable to Arbitrary File Creation/Overwrite via Hardlink Path Traversal.” A few things to note here:

- GitHub mentions that it cannot automatically update the dependency to a non-vulnerable version.

- The package is pulled in via a lot of different packages.

- If exploited, attackers can supposedly read essentially any file on a server.

Sounds scary, right? Well, certainly. There’s just one issue: the chances of this actually happening are closer to zero than to one percent. Now, don’t get me wrong: This could still be exploited. But in the particular context in which the vulnerable code is used here, it’s negligible. Let me tell you why:

- The good news: Users of Zettlr aren’t affected, because that code never ends up in the binary. It is only used during development.

- More specifically, `tar` is used during development to download assets necessary to build the application.

- The archives that are being fetched are known, and a malicious actor cannot supply a link to their own, malicious archive.

- Last but not least: This advisory assumes that the code is executed with a user that can do anything. Further below in the advisory is a table with potential effects of this. One line is instructive: “User Creation: `/etc/passwd` (if running as root) → Add new privileged user.” Think about this: only on a server where the node user is root could this become a problem. Essentially, the advisory therefore assumes that one node-package must offset all the bad decisions of someone setting up a server.

That is what I mean by “context matters.” It is absolutely an exploitable vulnerability. But only in very narrow and specific contexts. That is the reason this hasn’t yet been fixed by the maintainers of @electron-forge.[1] But: it sows some doubts about how safe the software that I use to build software really is.

This is a pattern I observe frequently: Someone reports a potential issue, but when thinking about this for more than ten minutes, it’s clear that it’s not actually an issue. Many security reports – especially in recent months – have started to look like someone opened a 101 book on secure software design and wrote a dumb script that reports these patterns whenever it finds them. To make matters worse, this approach then can even miss things that malicious actors can actually exploit, because it only looks for “exploitable-looking” patterns. And this noise can drown out real issues, where assumptions are violated and which can actually hurt users.

Missing the Obvious

One final example. A few weeks ago, I received an email about a potential security vulnerability in Zettlr. I was very happy, because the email looked thorough and verbose. It included code snippets showing the vulnerable code, explained how it could be exploited, and proposed a fix. Unfortunately, none of that was relevant.

First, while the mentioned code had a weakness in the past, I had actually fixed what they reported a few days before I received the email. Moreover, that fix was even contained in the code snippets the email included to show the supposedly vulnerable code! So someone just copied the code from somewhere without double-checking it.

But secondly, and much more importantly: They completely overlooked another place in which I have made the same mistake. After reviewing the report, I found that other place, and fixed that as well. This report broke something. Because it showed me that I cannot rely as much on security researchers as I possibly wanted to.

I am still struggling with reports of possibly vulnerable code that isn’t a vulnerability in our context, because it is expected that it can do certain things that, in other contexts, would indeed constitute a vulnerability. This lack of consideration for context drives me mad.[2] Because I take every report seriously, since there could be a severe issue hidden under a pile of nonsense.

Final Thoughts

Possibly severe vulnerability reports are currently being buried under mountains of semi-relevant reports whose exploits depend on so many preconditions that being hit by a piano on the street sounds more likely. And this has real consequences. cURL has stopped their bug bounty program. And I have found it necessary to add the following clause to Zettlr’s security notes:

We take every security-related notification seriously, will read through them, and respond to them. If we determine that you have reported expected behavior, we will indicate that in our response to you. In this case, you may not open a CVE (see our security protocol below). If we further have strong reason to believe that your notification has been made in bad faith, we take the liberty to fully ignore your report or even take action depending on the situation. In such or similar cases, we affirm our legal right(s). Zettlr is a collaborative effort that only works if everyone works together, and we will defend this.

So, long story short: With security vulnerabilities it’s like with everything else in the world; too much of it can also kill you. We need far fewer security vulnerability reports, and far fewer people trying to become security researchers by reporting semi-relevant stuff just to get a CVE under their belt. We need to critically re-evaluate what constitutes a security vulnerability, and under which circumstances, and we need to increase the share of advisories rated “low” and “medium.” Because no, just because you found unsanitized HTML code doesn’t mean it’s “critical.”

Y’all need to chill the f*** down.

[1] If you want to hear a silly joke: yes, Electron Forge is maintained by Microsoft.

[2] What also drives me mad is that every such advisory has a severity attached to it, which can be low, medium, high, or critical. 99% of all reports I receive are marked as “high” or “critical.” I’m sorry, but no. If every security problem is high or critical, then none is high or critical.